Fix:

===

a)

Open contrail_plugin.py

#vim neutron/plugins/opencontrail/contrail_plugin.py

b)

Add the following statements at class level in class NeutronPluginContrailCoreV2:

#patch VIF_TYPES

portbindings.__dict__['VIF_TYPE_VROUTER'] = 'vrouter'

portbindings.VIF_TYPES.append(portbindings.VIF_TYPE_VROUTER)

Example:

-----------------

class NeutronPluginContrailCoreV2(neutron_plugin_base_v2.NeutronPluginBaseV2,
                                  securitygroup.SecurityGroupPluginBase,
                                  portbindings_base.PortBindingBaseMixin,
                                  external_net.External_net):

    # patch VIF_TYPES
    portbindings.__dict__['VIF_TYPE_VROUTER'] = 'vrouter'
    portbindings.VIF_TYPES.append(portbindings.VIF_TYPE_VROUTER)

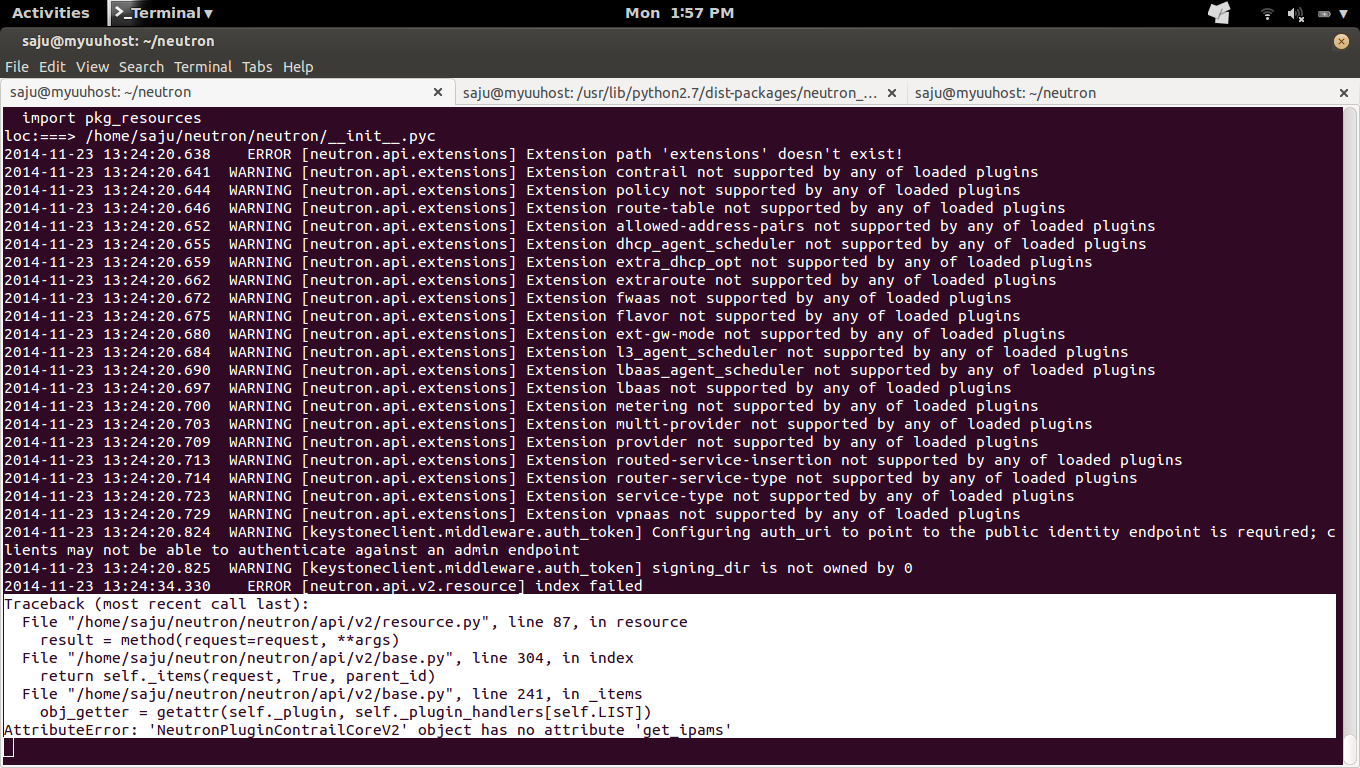
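The patch can also be tried outside Neutron. The following is a minimal sketch, not the plugin's actual code: `portbindings` here is a hypothetical stand-in module built with `types.ModuleType`, its `VIF_TYPES` entries are placeholder values, and `patch_vif_type_vrouter` is a helper name invented for this example. It uses `setattr` instead of writing to `__dict__` and checks before appending, so running the patch twice does not add a duplicate entry.

```python
import types

# Hypothetical stand-in for the portbindings module, so the sketch runs
# without Neutron installed. The values below are placeholders only.
portbindings = types.ModuleType("portbindings")
portbindings.VIF_TYPE = "binding:vif_type"
portbindings.VIF_TYPES = ["ovs", "bridge", "other"]


def patch_vif_type_vrouter(module, value="vrouter"):
    """Add VIF_TYPE_VROUTER to `module` if it is missing (safe to repeat)."""
    if not hasattr(module, "VIF_TYPE_VROUTER"):
        setattr(module, "VIF_TYPE_VROUTER", value)
    if module.VIF_TYPE_VROUTER not in module.VIF_TYPES:
        module.VIF_TYPES.append(module.VIF_TYPE_VROUTER)
    return module


patch_vif_type_vrouter(portbindings)
patch_vif_type_vrouter(portbindings)  # second call changes nothing

# The kind of lookup that raises AttributeError without the patch.
base_binding = {portbindings.VIF_TYPE: portbindings.VIF_TYPE_VROUTER}
print(base_binding)
```

In the real plugin the same two statements would target the imported `portbindings` module, as in the class-level example above; the guard only matters if the module is patched from more than one place.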

ERROR:

======

#sudo python neutron-server --config-file /etc/neutron/neutron.conf --log-file /var/log/neutron/server.log --config-file /etc/neutron/plugins/opencontrail/ContrailPlugin.ini

/usr/lib/python2.7/dist-packages/eventlet/hubs/__init__.py:8: UserWarning: Module neutron was already imported from /home/saju/neutron/neutron/__init__.pyc, but /usr/lib/python2.7/dist-packages is being added to sys.path
  import pkg_resources
loc:===> /home/saju/neutron/neutron/__init__.pyc
2014-11-23 11:02:30.650 ERROR [neutron.service] Unrecoverable error: please check log for details.
Traceback (most recent call last):
  File "/home/saju/neutron/neutron/service.py", line 105, in serve_wsgi
    service.start()
  File "/home/saju/neutron/neutron/service.py", line 74, in start
    self.wsgi_app = _run_wsgi(self.app_name)
  File "/home/saju/neutron/neutron/service.py", line 173, in _run_wsgi
    app = config.load_paste_app(app_name)
  File "/home/saju/neutron/neutron/common/config.py", line 170, in load_paste_app
    app = deploy.loadapp("config:%s" % config_path, name=app_name)
  File "/usr/lib/python2.7/dist-packages/paste/deploy/loadwsgi.py", line 247, in loadapp
    return loadobj(APP, uri, name=name, **kw)
  File "/usr/lib/python2.7/dist-packages/paste/deploy/loadwsgi.py", line 272, in loadobj
    return context.create()
  File "/usr/lib/python2.7/dist-packages/paste/deploy/loadwsgi.py", line 710, in create
    return self.object_type.invoke(self)
  File "/usr/lib/python2.7/dist-packages/paste/deploy/loadwsgi.py", line 144, in invoke
    **context.local_conf)
  File "/usr/lib/python2.7/dist-packages/paste/deploy/util.py", line 56, in fix_call
    val = callable(*args, **kw)
  File "/usr/lib/python2.7/dist-packages/paste/urlmap.py", line 25, in urlmap_factory
    app = loader.get_app(app_name, global_conf=global_conf)
  File "/usr/lib/python2.7/dist-packages/paste/deploy/loadwsgi.py", line 350, in get_app
    name=name, global_conf=global_conf).create()
  File "/usr/lib/python2.7/dist-packages/paste/deploy/loadwsgi.py", line 710, in create
    return self.object_type.invoke(self)
  File "/usr/lib/python2.7/dist-packages/paste/deploy/loadwsgi.py", line 144, in invoke
    **context.local_conf)
  File "/usr/lib/python2.7/dist-packages/paste/deploy/util.py", line 56, in fix_call
    val = callable(*args, **kw)
  File "/home/saju/neutron/neutron/auth.py", line 69, in pipeline_factory
    app = loader.get_app(pipeline[-1])
  File "/usr/lib/python2.7/dist-packages/paste/deploy/loadwsgi.py", line 350, in get_app
    name=name, global_conf=global_conf).create()
  File "/usr/lib/python2.7/dist-packages/paste/deploy/loadwsgi.py", line 710, in create
    return self.object_type.invoke(self)
  File "/usr/lib/python2.7/dist-packages/paste/deploy/loadwsgi.py", line 146, in invoke
    return fix_call(context.object, context.global_conf, **context.local_conf)
  File "/usr/lib/python2.7/dist-packages/paste/deploy/util.py", line 56, in fix_call
    val = callable(*args, **kw)
  File "/home/saju/neutron/neutron/api/v2/router.py", line 71, in factory
    return cls(**local_config)
  File "/home/saju/neutron/neutron/api/v2/router.py", line 75, in __init__
    plugin = manager.NeutronManager.get_plugin()
  File "/home/saju/neutron/neutron/manager.py", line 222, in get_plugin
    return weakref.proxy(cls.get_instance().plugin)
  File "/home/saju/neutron/neutron/manager.py", line 216, in get_instance
    cls._create_instance()
  File "/home/saju/neutron/neutron/openstack/common/lockutils.py", line 249, in inner
    return f(*args, **kwargs)
  File "/home/saju/neutron/neutron/manager.py", line 202, in _create_instance
    cls._instance = cls()
  File "/home/saju/neutron/neutron/manager.py", line 114, in __init__
    plugin_provider)
  File "/home/saju/neutron/neutron/manager.py", line 142, in _get_plugin_instance
    return plugin_class()
  File "/home/saju/neutron/neutron/plugins/opencontrail/contrail_plugin.py", line 72, in __init__
    self.base_binding_dict = self._get_base_binding_dict()
  File "/home/saju/neutron/neutron/plugins/opencontrail/contrail_plugin.py", line 78, in _get_base_binding_dict
    portbindings.VIF_TYPE: portbindings.VIF_TYPE_VROUTER,
AttributeError: 'module' object has no attribute 'VIF_TYPE_VROUTER'
2014-11-23 11:02:30.657 CRITICAL [neutron] 'module' object has no attribute 'VIF_TYPE_VROUTER'